In most walks of life, AI’s presence can already be felt. In healthcare, the benefits are quite frankly mind-boggling; AI-powered platforms are unlocking new levels of efficiency and precision across medical practices.

In a recent report by the World Economic Forum (WEF), AI software was cited as twice as accurate as professionals at analysing brain scans of stroke patients. Alongside its accuracy, the AI model also identified the timescale within which the stroke happened, which is crucial information for professionals.

In administration, the gains are also tremendous, particularly for the world’s overworked healthcare professionals. Dragon Copilot, for example, is an AI healthcare tool that can listen to and create notes on clinical consultations. In Germany, an AI platform called Elea has cut testing and diagnosis times from weeks to hours.

However, to gain the most from AI in healthcare, the supporting infrastructure, from power reliability and cooling efficiency to data centre design, must be dependable if healthcare is to reach the proverbial next frontier in patient care.

Localised AI in healthcare

The evolution of AI deployment in healthcare mirrors the earlier trajectory of cloud computing. Initially, there was a rush to centralise workloads; however, over time, the healthcare industry has recognised that not all applications benefit from this model.

For example, latency-sensitive applications like diagnostic imaging or real-time patient monitoring require immediate processing. The result is a growing shift towards edge AI, where data is processed closer to the point of care rather than in distant data centres.

This trend is especially relevant in Africa, where inconsistent network connectivity can limit reliance on centralised systems. Here, localised compute enables healthcare facilities to maintain control over critical operations, ensuring faster turnaround times and greater resilience in the face of connectivity or power disruptions.

Reliable power is absolute

The uptime of healthcare infrastructure is non-negotiable. For example, a Tier IV data centre, commonly cited at 99.995% uptime with full fault tolerance, represents the gold standard, ensuring uninterrupted care even when equipment fails.
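As a rough illustration of what such uptime figures mean in practice, the short Python sketch below converts an availability percentage into the downtime it allows per year. The function name and the sample percentages are illustrative assumptions, not figures taken from any certification body.

```python
# Minutes in a non-leap year: 365 days x 24 hours x 60 minutes = 525,600
MINUTES_PER_YEAR = 365 * 24 * 60


def annual_downtime_minutes(availability_percent: float) -> float:
    """Return the downtime per year, in minutes, implied by an availability percentage."""
    if not 0 <= availability_percent <= 100:
        raise ValueError("availability_percent must be between 0 and 100")
    return MINUTES_PER_YEAR * (1 - availability_percent / 100)


# Sample availability levels, chosen only for comparison
for pct in (99.9, 99.99, 99.995):
    minutes = annual_downtime_minutes(pct)
    print(f"{pct}% availability allows about {minutes:.1f} minutes of downtime per year")
```

Each additional "nine" cuts the permitted downtime roughly tenfold, which is why small differences in the headline percentage matter so much for clinical systems.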

AI platforms rely on continuous data processing, and even brief interruptions can compromise diagnostics, delay treatment, or disrupt critical workflows. This makes reliable operations, underpinned by dependable power infrastructure, essential.

Again, localised data centres, particularly those deployed at the edge, offer healthcare providers a feasible alternative and greater control over their power environments. Instead of relying on distant facilities, hospitals can implement tailored solutions that address their specific challenges, such as backup power systems, redundancy, or real-time monitoring.

Cooling for scale

It is well known that AI workloads require copious amounts of processing and, in turn, substantial cooling. Indeed, high-performance computing (HPC) generates significant heat, and without proper thermal management, system reliability and lifespan can be compromised.

However, cooling requirements differ by sector, and in healthcare they are shaped by the specific workloads being supported:

- Air cooling remains sufficient for many edge deployments with moderate compute requirements

- Hybrid models, which combine air cooling with targeted liquid cooling, are increasingly common for mixed workloads

- High-density cooling solutions are reserved for specialised applications requiring intensive processing power

The benefit of this flexibility is that healthcare providers can direct investment to the operations that need the most cooling, deploying it on a use-case-by-use-case basis.

No one-size-fits-all approach

Perhaps the most important takeaway is that there is no universal blueprint for AI infrastructure in healthcare.

Each facility operates within a unique context defined by its legacy systems, clinical priorities, physical space, and local infrastructure constraints. As a result, successful AI adoption requires a highly tailored approach.

In many parts of Africa, this has led to growing interest in prefabricated modular data centres, which offer a practical alternative to traditional builds, allowing healthcare providers to deploy scalable, self-contained environments that can be customised to their needs.

Prefabrication also simplifies deployment, reduces time-to-value, and enables facilities to scale incrementally as demand grows. It provides the flexibility to balance edge and cloud strategies, keeping critical workloads local while leveraging the cloud for less time-sensitive processing and analysis.
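One way to picture this edge and cloud split is as a simple placement rule. The Python sketch below is only an illustration under stated assumptions: the `Workload` fields, the `place` function, the 150 ms cloud round-trip figure, and the example latency budgets are all hypothetical, and a real facility would set them from its own measurements and clinical requirements.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    max_latency_ms: float      # longest acceptable response time (hypothetical value)
    must_survive_outage: bool  # must keep running if the network link drops


# Hypothetical round-trip time to a distant cloud region, for illustration only
ASSUMED_CLOUD_RTT_MS = 150.0


def place(workload: Workload, cloud_rtt_ms: float = ASSUMED_CLOUD_RTT_MS) -> str:
    """Return "edge" for outage-critical or latency-sensitive workloads, else "cloud"."""
    if workload.must_survive_outage or workload.max_latency_ms < cloud_rtt_ms:
        return "edge"
    return "cloud"


# Example workloads with made-up latency budgets
workloads = [
    Workload("real-time patient monitoring", max_latency_ms=50.0, must_survive_outage=True),
    Workload("diagnostic imaging", max_latency_ms=100.0, must_survive_outage=True),
    Workload("retrospective analytics", max_latency_ms=60_000.0, must_survive_outage=False),
]

for w in workloads:
    print(f"{w.name}: {place(w)}")
```

The rule is deliberately crude: anything that cannot tolerate a dropped link or a slow round trip stays local, and everything else is a candidate for the cloud.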

Ultimately, as AI continues to transform healthcare, its success will depend not only on technological innovation but also on the strength of the infrastructure that supports it.

For healthcare providers, the opportunities lie in aligning infrastructure investments with clinical goals. In doing so, they can unlock the full potential of AI, potentially changing the way we view healthcare today.

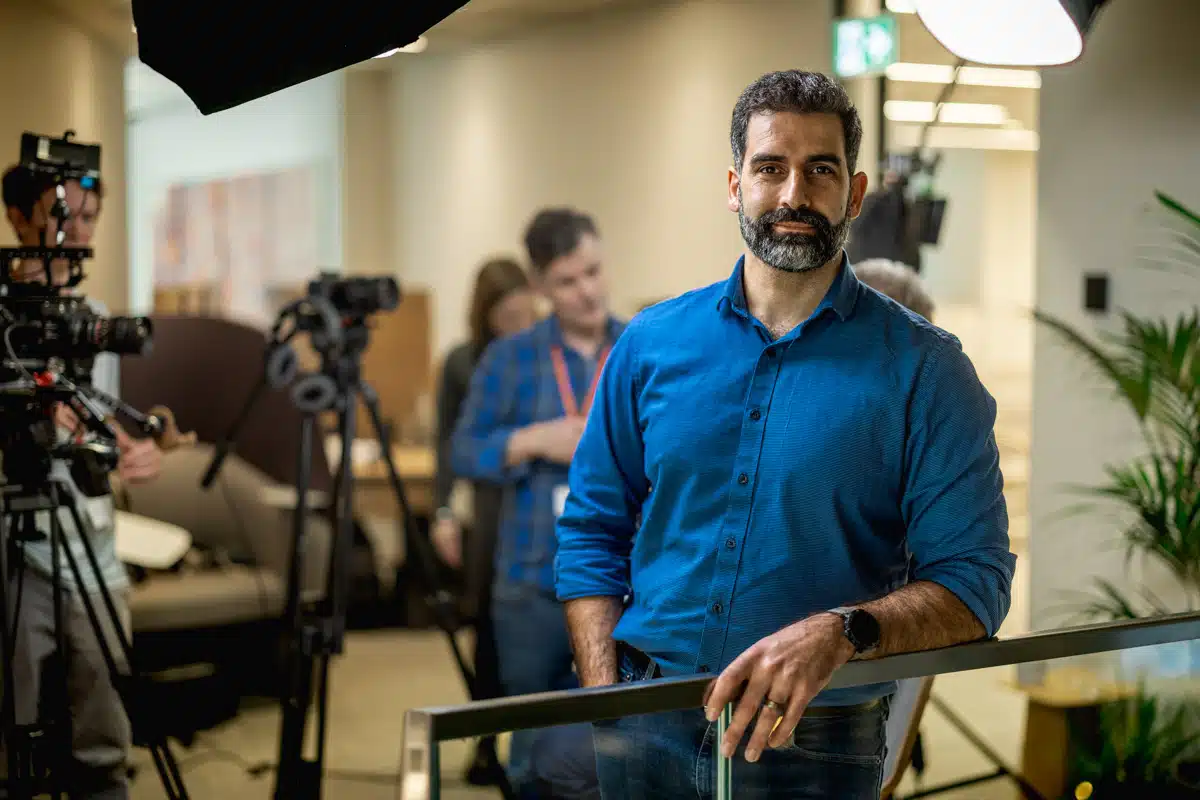

About the Author

Steven Santini is the Vice President, Secure Power, Sub-Saharan Africa at Schneider Electric, bringing more than 15 years of experience in IT infrastructure, channel leadership, and strategic alliances. His expertise spans building solution-driven ecosystems that bridge IT and OT technologies.